I published the first installment of On Coding on September 11, 2016. It seems a bit surreal that this thread is over a year old — but all good things must come to an end. I really enjoyed writing these posts; they helped me organize my thoughts about my own personal coding history.

But there are some loose ends I’d like to tie up. I’ve had brief forays into other languages — too brief to devote an entire post to any one of them. I’ve mentioned a few of them here and there, but I’d like to take the opportunity to include them all in one post.

Before doing so, I should mention that Processing is my most recent programming adventure. But I have written so much about using Processing in the context of my digital art course, I don’t feel the need to devote a separate post to it. And I feel I might bore you, dear reader….

The first of these languages is PASCAL, which I learned while taking an undergraduate programming class. Outside of the course, I only did a few things with PASCAL. I did write a routine that printed out 4 x 4 magic squares (I was really into magic squares back in college). I also dabbled with computer graphics and geometrical inversion: I recall giving a talk on geometrical inversion where I split the screen so that on one side you saw lines tangent to a conic section, while on the other side you saw the inverse circles tangent to the inverse limaçon.

While in graduate school, I had a one-year stint filling in for a faculty member at a nearby college, and I taught a data structures course using PASCAL. Now, I would never think of PASCAL as a go-to language for any particular purpose. It’s still around, but not nearly as popular as it was then.

The next is LISP*, which I used while teaching at a summer program during graduate school. This was a parallel version of LISP written for the Connection Machine, a state-of-the-art supercomputer in its day. It had literally thousands of processors, each very simple. But because of the sheer number of processors, the speed of the Connection Machine earned it the designation “supercomputer.”

The language was quite similar to LISP, except that many functions had parallel versions which could be executed on each processor. I can’t recall much of what I did on the Connection Machine, but I do remember programming Conway’s Game of Life on a 1000 x 1000 torus. Of course I can do that on my laptop right now in Mathematica, but at the time, it was a real feat! Remember, that was back in the day when Mandelbrot sets were calculated pixel by pixel and computers were so slow you could actually see the pixels march right on by….
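For the curious, here is a minimal sketch of one generation of the Game of Life on a torus. It is plain Python, not LISP*, and it is not the code I wrote back then; the function name `life_step` and the list-of-lists grid are just assumptions for illustration. The only "torus" part is the modular arithmetic, which makes the edges wrap around.

```python
def life_step(grid):
    """Advance a Game of Life grid by one generation on a torus.

    `grid` is a list of rows, each a list of 0s (dead) and 1s (alive).
    Indices wrap around, so the top edge touches the bottom edge and
    the left edge touches the right edge.
    """
    rows, cols = len(grid), len(grid[0])
    new = [[0] * cols for _ in range(rows)]
    for r in range(rows):
        for c in range(cols):
            # Count the eight neighbors, wrapping with the % operator.
            neighbors = sum(
                grid[(r + dr) % rows][(c + dc) % cols]
                for dr in (-1, 0, 1)
                for dc in (-1, 0, 1)
                if (dr, dc) != (0, 0)
            )
            # Standard rules: birth on 3, survival on 2 or 3.
            if neighbors == 3 or (grid[r][c] == 1 and neighbors == 2):
                new[r][c] = 1
    return new
```

Each cell is updated independently from the previous generation, which is exactly the kind of work that spreads nicely over thousands of simple processors. This sequential version just visits the cells one at a time.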

Chronologically, Maple (a direct competitor of Mathematica) comes next, sort of: I used Maple around the same time as LISP*. I seem to recall the only reason I played around with it was that I had a house-sitting job for a few summers, and there was a computer I could use which had Maple on it.

I recall finding Maple really useful, but there was a bit more syntax than with Mathematica. I really like Mathematica’s fundamental data type, the list, just like in LISP. But I think part of the reason I’ve stuck with Mathematica through the years is that most places I’ve been have supported it, and not having to pay for it myself is a big advantage. I know Maple has changed a lot since I last used it, so I don’t feel I’m able to say more about a comparison with Mathematica than what I’ve already said.

Jump ahead a few years to C++. I can’t remember exactly when I started learning it, but I was teaching at a small liberal arts college, and a colleague who usually taught an algorithms course was going on sabbatical. I was really the only other faculty member at the college qualified to teach it, and so there it was! The students in the course knew C++, so I needed to learn it, too….

Well, I should say I learned enough to write code for an introductory algorithms course, which means I didn’t have to dive in too deeply. I can’t say I used C++ for much after I taught the course, except I remember writing some routines to do financial planning. You know, like if you have different investments at different interest rates for different numbers of years, etc., how much will you have when you retire? (Being a mathematician, I thought it insane that I should pay someone else to do my financial planning, so I read everything I could on the topic and did it myself.) But I haven’t used C++ since.
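To give a flavor of that kind of calculation, here is a small sketch in Python (not the original C++, which I no longer have in front of me). It assumes the simplest possible model: each investment is a single lump sum that compounds once a year at a fixed rate, with no further contributions, fees, or taxes. The names `future_value` and `total_at_retirement` are made up for this example.

```python
def future_value(principal, annual_rate, years):
    """Value of a lump sum after compounding once a year.

    Uses the standard formula FV = P * (1 + r) ** n, where
    `annual_rate` is a fraction (0.05 means 5%).
    """
    return principal * (1 + annual_rate) ** years


def total_at_retirement(investments):
    """Add up the future values of several investments.

    `investments` is a list of (principal, annual_rate, years) tuples.
    """
    return sum(future_value(p, r, y) for p, r, y in investments)


if __name__ == "__main__":
    # Hypothetical portfolio: numbers chosen only for illustration.
    portfolio = [
        (10000, 0.05, 20),
        (5000, 0.03, 10),
    ]
    print(round(total_at_retirement(portfolio), 2))
```

A real plan would need regular contributions, inflation, and so on, but the core of it is just this formula applied over and over.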

Last, and perhaps least, there’s Java. I learned Java when I was applying for a job teaching mathematics and computer science, and the language taught was Java. I had never written a line in Java before, and there was some concern that I wasn’t qualified.

So I wrote a little interactive game based on some puzzles I created, which was really quite nice, if I must say. The only way to show you know how to code in a language is to code, so I did it.

It was rather unpleasant, though. What I needed to do was really just algorithmic, and the object-orientedness of Java and the need to precisely define data structures really got in the way. I hope never to write another line of Java again. (Incidentally — long story — I was offered the job but turned it down. Saved me from teaching Java….)

And I think that’s about it! If I can leave you with anything after this series, it’s GO CODE! Learn as many different types of languages as you can. The best way to learn is to find something you really want to do, and then go for it.

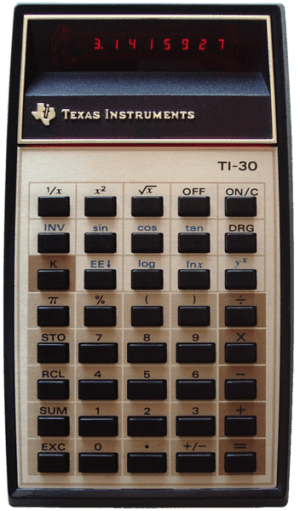

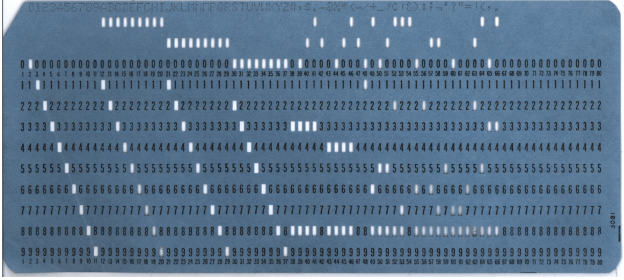

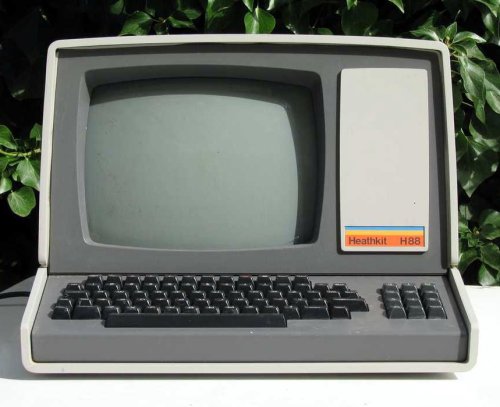

Remember, my first programming language was Fortran — using punch cards. Running batch jobs. Today, you’ve got laptops and the internet. The only limit is your imagination….