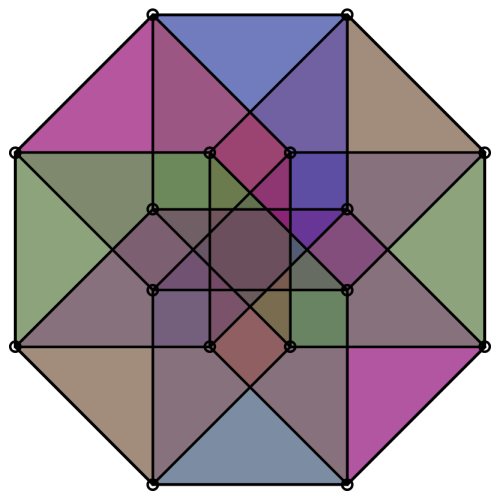

In my last post, I talked about a textbook I had written to use in a course which, among other things, introduced students to a non-Euclidean geometry — spherical geometry. And while the primary purpose of the text was for teaching a college-level course, the actual content of the text had a different origin.

As I have mentioned previously, I got interested in polyhedra during graduate school, and was very fond of taking books about polyhedron models out of our mathematics library at Carnegie Mellon. It was easy enough to photocopy the nets provided, or use the numerical data to construct nets on my own.

But what was missing for me, a mathematician-in-training, was a more rigorous discussion of these intriguing models. For example, Wenninger’s Polyhedron Models contains many nets with some idea of how to construct them from a more general geometric point of view, but few details. And his Spherical Models contains many tables of data of angular measures to three decimal places, but many derivations are missing. Other books included metrical data such as the measures of dihedral and edge angles and circumradii, but again, often only numerically.

I should point out that this is not necessarily a criticism of these wonderful books, since each book had its own purpose. What I found, though, was that there was really no book out there which took a more mathematically sophisticated viewpoint at an intermediate level.

So I decided that if I wanted to know more about polyhedra — that is, know the exact metrical data associated with polyhedra, not just numerical approximations — I needed to do some work on my own.

I used two approaches: coordinates and linear algebra, and spherical trigonometry. In both cases, I wanted precise results, which typically meant knowing exact values for the cosines of dihedral and edge angles, for example. When you know the cosine of an angle between 0° and 180°, you know the angle.

Why the cosine? The sine has the disadvantage of being ambiguous, since many dihedral angles of polyhedra are obtuse, so you need more information than just the sine of the angle. Using the tangent involved the troublesome case of 90° in formulas, where the tangent is undefined; this occurred rarely, but was still a nuisance. Some sources use the tangent of the half-angle, but this often involves additional calculations.
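To make the ambiguity concrete, here is a minimal Python sketch. The function names and the sample angle of 120° are my own choices for illustration. It tries to recover an obtuse angle first from its sine and then from its cosine.

```python
import math

def recover_from_sine(angle_deg):
    """Recover an angle (in degrees) from its sine alone, using asin."""
    return math.degrees(math.asin(math.sin(math.radians(angle_deg))))

def recover_from_cosine(angle_deg):
    """Recover an angle (in degrees) from its cosine alone, using acos."""
    return math.degrees(math.acos(math.cos(math.radians(angle_deg))))

obtuse = 120.0  # hypothetical sample angle, chosen only because it is obtuse
from_sine = recover_from_sine(obtuse)
from_cosine = recover_from_cosine(obtuse)

# asin only returns values between -90 and 90 degrees, so the sine gives back
# the supplement (about 60 degrees); acos covers 0 to 180 degrees, so the
# cosine gives back the original angle.
print(f"from sine:   {from_sine:.3f}")
print(f"from cosine: {from_cosine:.3f}")
```

The sine of 120° and the sine of 60° are equal, so the sine alone cannot distinguish an obtuse dihedral angle from its acute supplement, while the cosine can.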

For my geometry course, I focused on spherical trigonometry — recall that I did not want linear algebra as a prerequisite for the course so that it would be accessible to a broader student demographic. But I still wanted to keep the mathematical rigor — use spherical trigonometry to calculate exact values of the cosines of relevant angles.

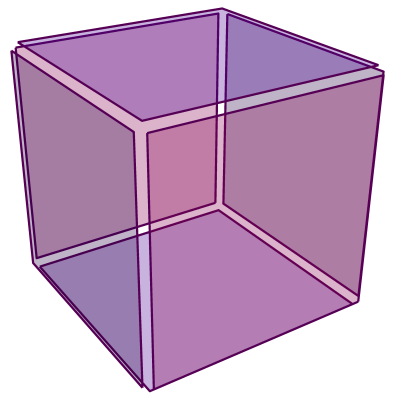

To give you an idea of what’s involved, let’s calculate the dihedral angle of a dodecahedron — that is, the angle between any two pentagonal faces of a regular dodecahedron.

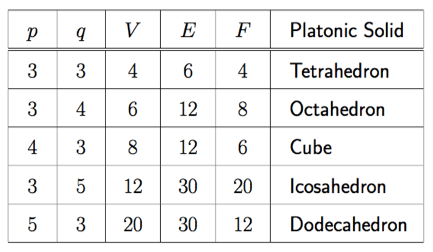

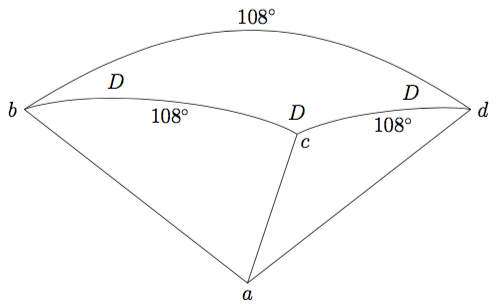

To use spherical trigonometry (see a previous post on spherical geometry for a refresher), we imagine a small sphere centered at a vertex of the dodecahedron — it will carve out a spherical triangle, as shown below.

Here, a is a vertex of the dodecahedron, and points b, c, and d are the points where the small sphere intersects the edges of the dodecahedron meeting at a. This creates a spherical triangle whose sides all have measure 108°, since the interior angles of a regular pentagon all have measure 108°.

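The angles between the sides of the spherical triangle with vertices b, c, and d are the dihedral angles of the dodecahedron, whose measure we call D. We may apply the law of cosines for spherical triangles in order to find D (see the relevant Wikipedia page; in the textbook, spherical trigonometric formulas are discussed in detail):

$$\cos 108^\circ = \cos 108^\circ \cos 108^\circ + \sin 108^\circ \sin 108^\circ \cos D.$$

To find D we need to know the following (also explained in the text):

$$\cos 108^\circ = -\frac{1}{2\tau},$$

where $\tau = \frac{1+\sqrt{5}}{2}$ is the golden ratio. (I use $\tau$ for the golden ratio as this is the notation Coxeter used in his well-known Regular Polytopes, which inspired generations of polyhedral enthusiasts.)

Solving, with $\sin^2 108^\circ = 1 - \cos^2 108^\circ$, yields

$$\cos D = \frac{\cos 108^\circ}{1 + \cos 108^\circ} = -\frac{1}{2\tau - 1} = -\frac{1}{\sqrt{5}},$$

so that D is approximately 116.6°.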
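As a numerical check on this calculation, here is a short Python sketch. It rearranges the spherical law of cosines, cos a = cos b cos c + sin b sin c cos A, to solve for the angle A opposite side a, then sets all three sides to 108°. The function name is my own, and the comparison value −1/√5 is the closed form that this equation reduces to.

```python
import math

def spherical_angle_from_sides(a, b, c):
    """Angle opposite side a in a spherical triangle with sides a, b, c.

    All arguments and the result are in radians. This is the spherical law
    of cosines, cos a = cos b cos c + sin b sin c cos A, solved for A.
    """
    cos_A = (math.cos(a) - math.cos(b) * math.cos(c)) / (math.sin(b) * math.sin(c))
    return math.acos(cos_A)

# Every side of the vertex triangle is a pentagon's interior angle, 108 degrees.
side = math.radians(108.0)
D = spherical_angle_from_sides(side, side, side)

cos_D = math.cos(D)
exact_cos_D = -1 / math.sqrt(5)  # closed form to compare against

print(round(math.degrees(D), 3))  # 116.565
print(abs(cos_D - exact_cos_D) < 1e-12)
```

The same function works for any vertex where three faces meet: pass the face angles of the three polygons as the sides, with the side opposite the edge of interest first.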

Now this is not the only way to find D; it is possible to find Cartesian coordinates for the vertices of the dodecahedron and use some linear algebra, for example. But using spherical trigonometry is straightforward and elegant — and is surprisingly versatile.
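For comparison, here is a rough sketch of the coordinate approach in Python. It assumes the standard coordinates for a regular dodecahedron, in which (1, 1, 1) is a vertex whose three neighbors are cyclic permutations of (0, 1/τ, τ); these coordinates and the helper names are not taken from the text. The dihedral angle along edge ab is the angle between the parts of the other two edges at a that are perpendicular to ab.

```python
import math

TAU = (1 + math.sqrt(5)) / 2  # golden ratio

def sub(p, q):
    """Vector from q to p."""
    return tuple(x - y for x, y in zip(p, q))

def dot(p, q):
    """Dot product of two vectors."""
    return sum(x * y for x, y in zip(p, q))

def reject(v, u):
    """Component of v perpendicular to u."""
    k = dot(v, u) / dot(u, u)
    return tuple(x - k * y for x, y in zip(v, u))

# Assumed standard coordinates: vertex a and its three neighbors b, c, d.
a = (1.0, 1.0, 1.0)
b = (0.0, 1 / TAU, TAU)
c = (1 / TAU, TAU, 0.0)
d = (TAU, 0.0, 1 / TAU)

u, v, w = sub(b, a), sub(c, a), sub(d, a)

# The two faces sharing edge ab contain edges ac and ad respectively, so
# project those edges onto the plane perpendicular to ab and measure the angle.
v_perp = reject(v, u)
w_perp = reject(w, u)
cos_D = dot(v_perp, w_perp) / math.sqrt(dot(v_perp, v_perp) * dot(w_perp, w_perp))
D_deg = math.degrees(math.acos(cos_D))

print(round(D_deg, 3))  # 116.565
```

Both routes give the same cosine, −1/√5, which is a useful sanity check when working out less familiar polyhedra.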

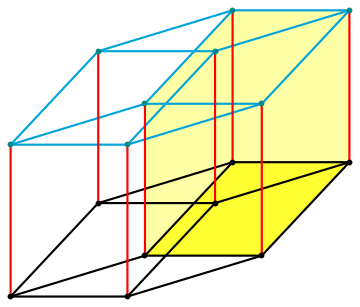

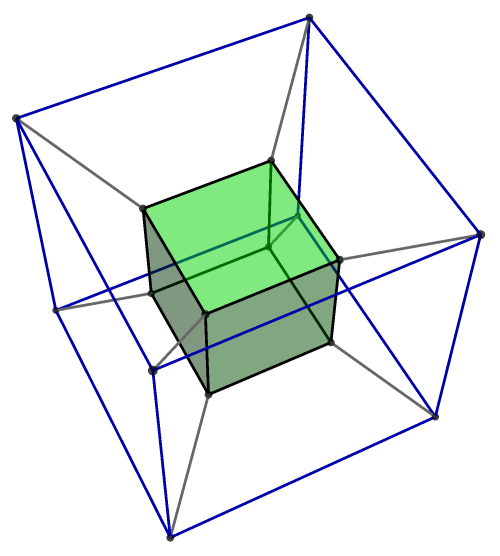

When I teach my polyhedra course, we use spherical trigonometry to find edge and dihedral angles of all the Platonic and Archimedean solids. Further, we use spherical trigonometry to design geodesic models, like the 4-frequency icosahedron shown here.
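For the five Platonic solids, the dihedral angles can be tabulated with a few lines of Python. This sketch uses a standard closed form, sin(D/2) = cos(π/q) / sin(π/p), for the solid with q regular p-gons meeting at each vertex. It is not necessarily the derivation used in the course or the text, and the function and dictionary names are my own.

```python
import math

def platonic_dihedral_deg(p, q):
    """Dihedral angle, in degrees, of the Platonic solid {p, q}.

    p is the number of sides of each face and q is the number of faces
    meeting at each vertex. Uses sin(D/2) = cos(pi/q) / sin(pi/p).
    """
    half = math.asin(math.cos(math.pi / q) / math.sin(math.pi / p))
    return math.degrees(2 * half)

# (p, q) for each of the five Platonic solids.
SOLIDS = {
    "tetrahedron": (3, 3),
    "cube": (4, 3),
    "octahedron": (3, 4),
    "dodecahedron": (5, 3),
    "icosahedron": (3, 5),
}

dihedrals = {name: platonic_dihedral_deg(p, q) for name, (p, q) in SOLIDS.items()}

for name, angle in dihedrals.items():
    print(f"{name:13s}{angle:9.3f}")
```

The dodecahedron entry agrees with the value of about 116.6° found above. The Archimedean solids have more than one kind of face at each vertex, so they need the general spherical triangle formulas rather than a single closed form like this one.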

So the emphasis is on applying spherical trigonometry to the construction of physical models. In a typical two-lecture-per-week course, one lecture is always a hands-on laboratory, where we build a polyhedron or geodesic model we studied previously. We never build a model without knowing precisely how it is designed — we first understand the mathematics of the model, and then we build it. Included in the text is a week-by-week outline of how I’ve used the text in the classroom.

Now the classroom textbook is just Part I of the book. Part II is a bit more technical, and intended for the true polyhedral enthusiast. It turns out that spherical trigonometry is a powerful tool for studying polyhedra. In fact, the dihedral angles of all the uniform polyhedra may be calculated using spherical trigonometry — even the most complex snub polyhedra. However, it is sometimes necessary to solve sixth-degree polynomials in order to do so!

I thought to add Part II to the volume as I had already done all the calculations some years ago. And, to my knowledge, the calculation of the dihedral angles of all the uniform polyhedra using spherical trigonometry has not been published before. So I hope to contribute something to the literature of classical polyhedral geometry by publishing this book, in a way that someone with a modest mathematical background can understand.

As I continue with the book project, I’ll post updates as I discover interesting geometrical tidbits along the way!