Now on to some calculus involving hyperbolic trigonometry! Today, we’ll look at trigonometric substitutions involving hyperbolic functions.

Let’s start with a typical example:

$$\int\sqrt{1+x^2}\,dx.$$

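The usual technique involving circular trigonometric functions is to put $x=\tan(\theta),$ so that

$$dx=\sec^2(\theta)\,d\theta\qquad\text{and}\qquad\sqrt{1+x^2}=\sec(\theta),$$

and the integral transforms to

$$\int\sec^3(\theta)\,d\theta.$$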

In general, note that when taking square roots, a negative sign is sometimes needed if the limits of integration demand it.

This integral requires integration by parts, and ultimately evaluating the integral

$$\int\sec(\theta)\,d\theta.$$

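And how is this done? I shudder when calculus textbooks write

$$\int\sec(\theta)\,d\theta=\int\sec(\theta)\cdot\frac{\sec(\theta)+\tan(\theta)}{\sec(\theta)+\tan(\theta)}\,d\theta=\int\frac{\sec^2(\theta)+\sec(\theta)\tan(\theta)}{\sec(\theta)+\tan(\theta)}\,d\theta.$$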

How does one motivate that “trick” to aspiring calculus students? Of course the textbooks never do.

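Now let’s see how to approach the original integral using a hyperbolic substitution. We substitute $x=\sinh(t),$ so that

$$dx=\cosh(t)\,dt$$

and

$$\sqrt{1+x^2}=\sqrt{1+\sinh^2(t)}=\cosh(t).$$

Note well that taking the positive square root is always correct, since

$$\cosh(t)=\frac{e^t+e^{-t}}{2}$$

is always positive!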

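This results in the integral

$$\int\cosh^2(t)\,dt=\int\frac{1+\cosh(2t)}{2}\,dt,$$

which is quite simple to evaluate:

$$\frac{t}{2}+\frac{\sinh(2t)}{4}+C=\frac{t}{2}+\frac{\sinh(t)\cosh(t)}{2}+C.$$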

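Now $\sinh(t)=x$ and $\cosh(t)=\sqrt{1+x^2}.$

Recall from last week that we derived an explicit formula for $t=\sinh^{-1}(x),$ namely $\ln\left(x+\sqrt{1+x^2}\right),$ and so our integral finally becomes

$$\frac12\left(x\sqrt{1+x^2}+\ln\left(x+\sqrt{1+x^2}\right)\right)+C.$$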
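As a quick numerical sanity check (a sketch, not part of the original derivation), one can differentiate the closed form with a central difference and compare it to $\sqrt{1+x^2}$, and also confirm that the logarithm really is $\sinh^{-1}(x)$ by comparing with Python’s `math.asinh`. The names `F` and `central_diff` and the sample points are illustrative choices.

```python
import math

def F(x):
    # Closed form from the substitution x = sinh(t):
    # (1/2) * (x*sqrt(1 + x^2) + ln(x + sqrt(1 + x^2)))
    r = math.sqrt(1 + x * x)
    return 0.5 * (x * r + math.log(x + r))

def central_diff(f, x, h=1e-5):
    # Symmetric difference quotient, an approximation to f'(x)
    return (f(x + h) - f(x - h)) / (2 * h)

xs = [-3.0, -0.5, 0.0, 1.0, 4.0]

# F'(x) should match the integrand sqrt(1 + x^2)
deriv_err = max(abs(central_diff(F, x) - math.sqrt(1 + x * x)) for x in xs)

# The logarithm is exactly t = asinh(x); compare with the library function
asinh_err = max(abs(math.log(x + math.sqrt(1 + x * x)) - math.asinh(x)) for x in xs)

print(f"max derivative error: {deriv_err:.2e}")
print(f"max asinh error:      {asinh_err:.2e}")
```

Both errors should be tiny (rounding-level for the logarithm, and on the order of the finite-difference error for the derivative).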

You likely noticed that using a hyperbolic substitution is no more complicated than using the circular substitution $x=\tan(\theta).$ What this means is: no need to ever integrate

$$\int\sec^3(\theta)\,d\theta$$

again! Frankly, I no longer teach integrals involving powers of $\tan(\theta)$ and $\sec(\theta)$ which require integration by parts. Simply put, it is not a good use of time. I think it is far better to introduce students to hyperbolic trigonometric substitution.

Now let’s take a look at the integral

$$\int\sqrt{x^2-1}\,dx.$$

The usual technique? Substitute $x=\sec(\theta)$ and transform the integral into

$$\int\tan^2(\theta)\sec(\theta)\,d\theta.$$

Sigh. Those irksome tangents and secants. A messy integration by parts again.

But not so using $x=\cosh(t).$ We get

$$dx=\sinh(t)\,dt$$

and

$$\sqrt{x^2-1}=\sqrt{\cosh^2(t)-1}=\sinh(t)$$

(here, a negative square root may be necessary).

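We rewrite $\displaystyle\int\sinh^2(t)\,dt$ as

$$\int\frac{\cosh(2t)-1}{2}\,dt.$$

This results in

$$\frac{\sinh(2t)}{4}-\frac{t}{2}+C=\frac{\sinh(t)\cosh(t)}{2}-\frac{t}{2}+C.$$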

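All we need now is a formula for $t=\cosh^{-1}(x),$ which may be found using the same technique we used last week for $\sinh^{-1}(x):$

$$\cosh^{-1}(x)=\ln\left(x+\sqrt{x^2-1}\right),\qquad x\ge1.$$

Thus, our integral evaluates to

$$\frac12\left(x\sqrt{x^2-1}-\ln\left(x+\sqrt{x^2-1}\right)\right)+C.$$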
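The same kind of numerical check works here, as a sketch restricted to $x>1$ (the branch where $\sinh(t)\ge0$, so no negative square root is needed). It compares the logarithmic formula for $\cosh^{-1}(x)$ with Python’s `math.acosh` and differentiates the closed form; `acosh_log`, `G`, and `central_diff` are illustrative names.

```python
import math

def acosh_log(x):
    # Explicit formula for the inverse hyperbolic cosine, valid for x >= 1
    return math.log(x + math.sqrt(x * x - 1))

def G(x):
    # Closed form from the substitution x = cosh(t), for x > 1:
    # (1/2) * (x*sqrt(x^2 - 1) - ln(x + sqrt(x^2 - 1)))
    return 0.5 * (x * math.sqrt(x * x - 1) - acosh_log(x))

def central_diff(f, x, h=1e-5):
    # Symmetric difference quotient, an approximation to f'(x)
    return (f(x + h) - f(x - h)) / (2 * h)

xs = [1.1, 1.5, 2.0, 5.0, 10.0]

# The log formula should agree with the library inverse cosh
formula_err = max(abs(acosh_log(x) - math.acosh(x)) for x in xs)

# G'(x) should match the integrand sqrt(x^2 - 1)
deriv_err = max(abs(central_diff(G, x) - math.sqrt(x * x - 1)) for x in xs)

print(f"max acosh formula error: {formula_err:.2e}")
print(f"max derivative error:    {deriv_err:.2e}")
```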

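We remark that the integral

$$\int\sqrt{1-x^2}\,dx$$

is easily evaluated using the substitution $x=\sin(\theta).$ Thus, integrals of the forms

$$\int\sqrt{a^2-x^2}\,dx,\qquad\int\sqrt{a^2+x^2}\,dx,\qquad\text{and}\qquad\int\sqrt{x^2-a^2}\,dx,$$

with $a>0,$ may be computed by using the substitutions

$$x=a\sin(\theta),\qquad x=a\sinh(t),\qquad\text{and}\qquad x=a\cosh(t),$$

respectively. It bears repeating: no more integrals involving powers of tangents and secants!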
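For the two hyperbolic cases with a general $a>0$, here is a sketch of how the computations above scale. With $x=a\sinh(t)$ we have $dx=a\cosh(t)\,dt$ and $\sqrt{a^2+x^2}=a\cosh(t)$; with $x=a\cosh(t)$ (and $x\ge a$) we have $dx=a\sinh(t)\,dt$ and $\sqrt{x^2-a^2}=a\sinh(t)$. The constant $-\tfrac{a^2}{2}\ln a$ arising from $\sinh^{-1}(x/a)=\ln\left(x+\sqrt{x^2+a^2}\right)-\ln a$ (and its $\cosh^{-1}$ analogue) is absorbed into $C$:

$$\begin{aligned}
\int\sqrt{a^2+x^2}\,dx &= a^2\int\cosh^2(t)\,dt=\frac{a^2}{2}\bigl(t+\sinh(t)\cosh(t)\bigr)+C\\
&=\frac12\left(x\sqrt{a^2+x^2}+a^2\ln\left(x+\sqrt{a^2+x^2}\right)\right)+C,\\[6pt]
\int\sqrt{x^2-a^2}\,dx &= a^2\int\sinh^2(t)\,dt=\frac{a^2}{2}\bigl(\sinh(t)\cosh(t)-t\bigr)+C\\
&=\frac12\left(x\sqrt{x^2-a^2}-a^2\ln\left(x+\sqrt{x^2-a^2}\right)\right)+C.
\end{aligned}$$

Setting $a=1$ recovers the two antiderivatives computed above for $\sqrt{1+x^2}$ and $\sqrt{x^2-1}$.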

One of the neatest applications of hyperbolic trigonometric substitution is using it to find

$$\int\sec(x)\,dx$$

without resorting to a completely unmotivated trick. Yes, I saved the best for last…

So how do we proceed? Let’s think by analogy. Why did the substitution work above? For the same reason

works: we can simplify

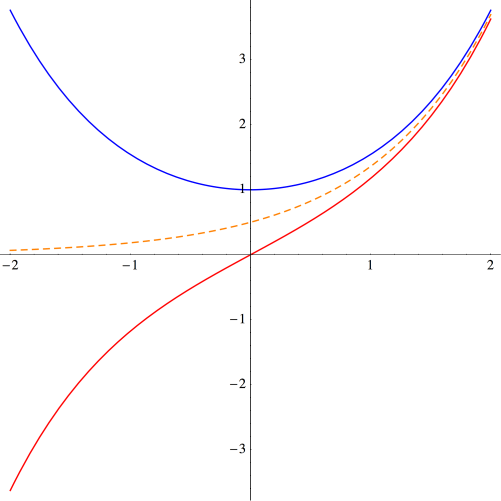

using one of the following two identities:

So is playing the role of

and

is playing the role of

What does that suggest? Try using the substitution

!

No, it’s not the first thing you’d think of, but it makes sense. Comparing the use of circular and hyperbolic trigonometric substitutions, the analogy is fairly straightforward, in my opinion. There’s much more motivation here than in calculus textbooks.

So with we have

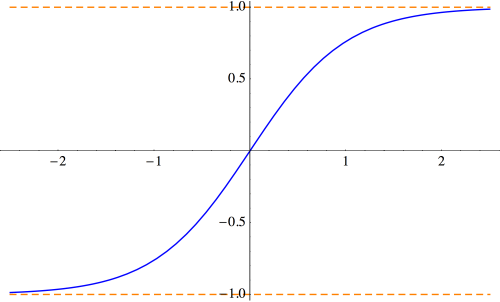

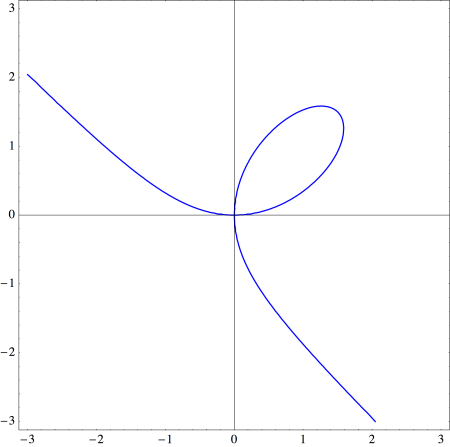

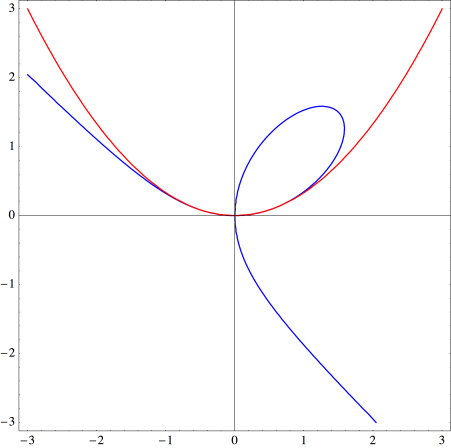

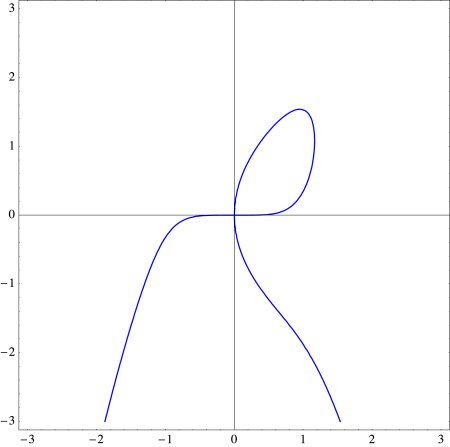

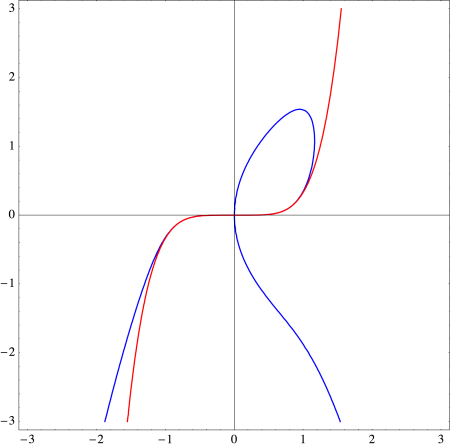

But notice that — just look at the above identities and compare. We remark that if

is restricted to the interval

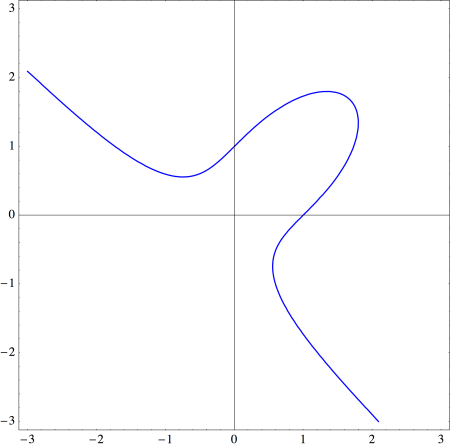

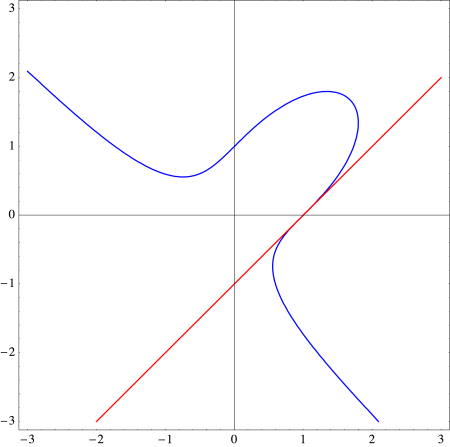

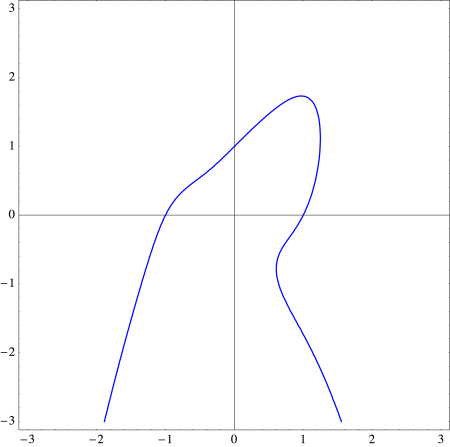

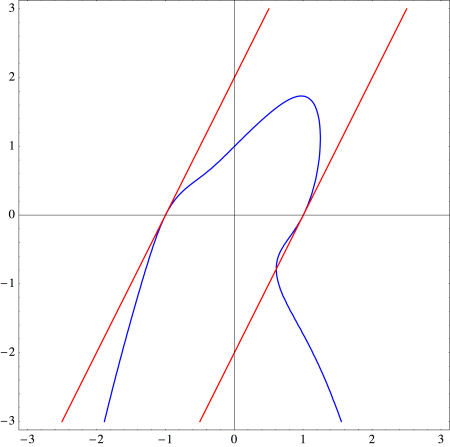

then as a result of the asymptotic behavior, the substitution

gives a bijection between the graphs of

and

and between the graphs of

and

In this case, the signs are always correct —

and

always have the same sign.
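The pairing can be checked numerically. This is a sketch under one assumption: each $x$ in $(-\pi/2,\pi/2)$ is paired with $t=\sinh^{-1}(\tan(x))$, so that $\tan(x)=\sinh(t)$ by construction. The check is then that $\cosh(t)$ reproduces $\sec(x)$, that the signs agree, and that $t$ increases with $x$ (so the correspondence is one-to-one). The helper `t_of_x` is hypothetical.

```python
import math

def t_of_x(x):
    # Hypothetical helper: the t paired with x in (-pi/2, pi/2),
    # chosen so that tan(x) = sinh(t); t has the same sign as x
    return math.asinh(math.tan(x))

xs = [-1.5, -1.0, -0.3, 0.0, 0.3, 1.0, 1.5]
ts = [t_of_x(x) for x in xs]

# cosh(t) should reproduce sec(x) = 1/cos(x) on this interval
sec_err = max(abs(math.cosh(t) - 1 / math.cos(x)) for x, t in zip(xs, ts))

# tan(x) and sinh(t) never disagree in sign
same_sign = all((math.tan(x) >= 0) == (math.sinh(t) >= 0) for x, t in zip(xs, ts))

# t is strictly increasing in x, so distinct x give distinct t
increasing = all(t1 < t2 for t1, t2 in zip(ts, ts[1:]))

print(sec_err, same_sign, increasing)
```

The check relies on $\cos(x)>0$ on this interval; outside it, $\sec(x)$ is negative and cannot equal $\cosh(t)$.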

So this means that

$$\sec(x)\,dx=dt.$$

What could be simpler?

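Thus, our integral becomes

$$\int\sec(x)\,dx=\int dt=t+C.$$

But

$$t=\sinh^{-1}\bigl(\tan(x)\bigr)=\ln\left(\tan(x)+\sqrt{1+\tan^2(x)}\right)=\ln\bigl(\tan(x)+\sec(x)\bigr).$$

Thus,

$$\int\sec(x)\,dx=\ln\bigl(\sec(x)+\tan(x)\bigr)+C.$$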

Voila!
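A numerical sketch confirms the result on $(-\pi/2,\pi/2)$: the derivative of $\ln(\sec(x)+\tan(x))$ should be $\sec(x)$, and the function should coincide with $t=\sinh^{-1}(\tan(x))$ from the substitution. The names `S` and `central_diff` and the sample points are illustrative.

```python
import math

def S(x):
    # Antiderivative of sec(x) on (-pi/2, pi/2): ln(sec(x) + tan(x))
    return math.log(1 / math.cos(x) + math.tan(x))

def central_diff(f, x, h=1e-5):
    # Symmetric difference quotient, an approximation to f'(x)
    return (f(x + h) - f(x - h)) / (2 * h)

xs = [-1.2, -0.5, 0.0, 0.7, 1.3]

# S'(x) should match sec(x)
deriv_err = max(abs(central_diff(S, x) - 1 / math.cos(x)) for x in xs)

# S(x) is the same function as t = asinh(tan(x))
asinh_err = max(abs(S(x) - math.asinh(math.tan(x))) for x in xs)

print(f"max derivative error: {deriv_err:.2e}")
print(f"max asinh error:      {asinh_err:.2e}")
```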

We note that if $x$ is restricted to the interval $(-\pi/2,\pi/2)$ as discussed above, then we always have

$$\sec(x)+\tan(x)>0,$$

so there is no need to put the argument of the logarithm in absolute values.
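One way to see the positivity, sketched using the pairing $\sec(x)=\cosh(t)$ and $\tan(x)=\sinh(t)$ on $(-\pi/2,\pi/2)$:

$$\sec(x)+\tan(x)=\cosh(t)+\sinh(t)=e^t>0.$$

Equivalently, without the substitution, $\sec(x)+\tan(x)=\dfrac{1+\sin(x)}{\cos(x)},$ and on this interval both $1+\sin(x)$ and $\cos(x)$ are positive. The first form also shows directly that $\ln\bigl(\sec(x)+\tan(x)\bigr)=\ln(e^t)=t.$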

Well, I’ve done my best to convince you of the wonder of hyperbolic trigonometric substitutions! If integrating $\sec(x)$ didn’t do it, well, that’s the best I’ve got.

The next installment of hyperbolic trigonometry? The Gudermannian function! What’s that, you ask? You’ll have to wait until next time — or I suppose you can just google it….

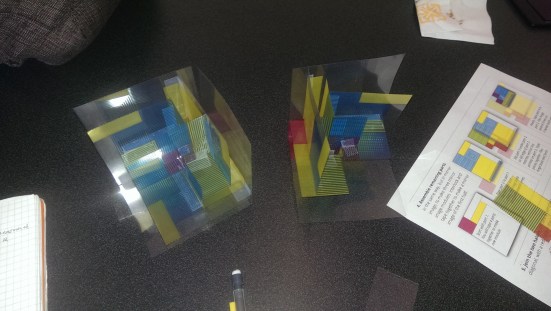

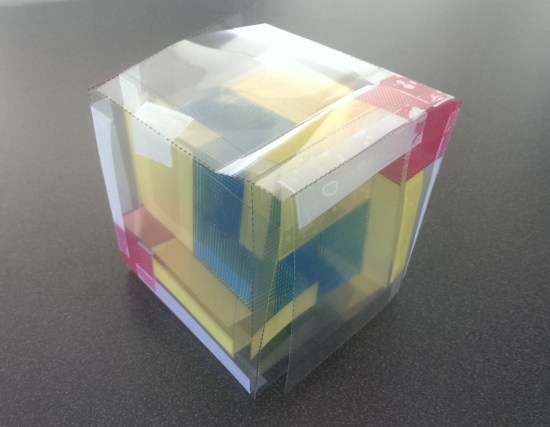

Look carefully, and you’ll see that no single edge in any of these dissections exactly matches any other. For these decompositions, Scott has proved they are minimal — so, for example, there is no motley dissection of one pentagon to ten or fewer. The proofs are not exactly elegant, but they serve their purpose. He also mentioned that he credits Donald Knuth with the term motley dissection, who used the term in a phone conversation not all that long ago.
