Last week’s post on the Geometry of Polynomials drew a lot of attention from folks who are interested in or teach calculus. So I thought I’d start a thread about other ideas related to teaching calculus.

This idea is certainly not new. But I think it is sorely underexploited in the calculus classroom. I like it because it reinforces the idea of derivative as linear approximation.

The main idea is to rewrite

$$\lim_{h \to 0} \frac{f(x+h) - f(x)}{h} = f'(x)$$

as

$$f(x+h) \approx f(x) + h f'(x),$$

with the note that this approximation is valid when $h \approx 0$. Writing the limit in this way, we see that $f(x+h)$, as a function of $h$, is linear in $h$ in the sense of the limit in the definition actually existing, meaning there is a good linear approximation to $f$ at $x$.

Moreover, in this sense, if

$$f(x+h) \approx f(x) + h\,g(x),$$

then it must be the case that $f'(x) = g(x)$. This is not difficult to prove.
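Here is one way the proof might go. It is only a sketch, and it assumes that the symbol $\approx$ is read as follows: the error $e(h)$ in the approximation satisfies $e(h)/h \to 0$ as $h \to 0$. The name $e(h)$ and this reading of $\approx$ are my assumptions for the sketch.

$$f(x+h) = f(x) + h\,g(x) + e(h), \qquad \lim_{h \to 0} \frac{e(h)}{h} = 0.$$

Subtracting $f(x)$ and dividing by $h$ gives

$$\frac{f(x+h) - f(x)}{h} = g(x) + \frac{e(h)}{h} \longrightarrow g(x) \quad \text{as } h \to 0,$$

so the limit in the definition exists and equals $g(x)$.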

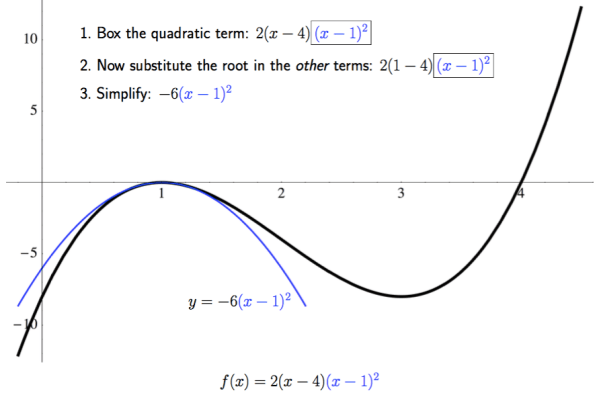

Let’s look at a simple example, like finding the derivative of $f(x) = x^2$. It’s easy to see that

$$f(x+h) = (x+h)^2 = x^2 + h(2x) + h^2.$$

So it’s easy to read off the derivative: ignore higher-order terms in $h$, and then look at the coefficient of $h$ as a function of $x$.

Note that this is perfectly rigorous. It should be clear that ignoring higher-order terms in $h$ is fine since when taking the limit as in the definition, only one $h$ divides out, meaning those terms contribute $0$ to the limit. So the coefficient of $h$ will be the only term to survive the limit process.

Also note that this is nothing more than a rearrangement of the algebra necessary to compute the derivative using the usual definition. I just find it is more intuitive, and less cumbersome notationally. But every step taken can be justified rigorously.
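For readers who like to see this with actual numbers, here is a minimal Python sketch for $f(x) = x^2$. It uses exact rational arithmetic so that the leftover term shows up as exactly $h^2$. The function name `remainder` and the base point $x = 3$ are arbitrary choices made for illustration.

```python
from fractions import Fraction

def remainder(x, h):
    """Return f(x+h) - (f(x) + h*2x) for f(x) = x^2, computed exactly."""
    f = lambda t: t * t
    return f(x + h) - (f(x) + h * 2 * x)

x = Fraction(3)  # arbitrary base point, chosen only for illustration
for k in range(1, 5):
    h = Fraction(1, 10**k)
    r = remainder(x, h)
    # r is exactly h^2, so r/h equals h and shrinks to 0 along with h
    print(f"h = {h}, remainder = {r}, remainder/h = {r / h}")
```

The remainder divided by $h$ is just $h$ again, which is the "only one $h$ divides out" observation in numerical form.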

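Moreover, this method is the one commonly used in more advanced mathematics, where functions take vectors as input. So if $f(\mathbf{x}) = \mathbf{x} \cdot \mathbf{x}$, we compute

$$f(\mathbf{x} + \mathbf{h}) = (\mathbf{x} + \mathbf{h}) \cdot (\mathbf{x} + \mathbf{h}) = \mathbf{x} \cdot \mathbf{x} + 2\,\mathbf{x} \cdot \mathbf{h} + \mathbf{h} \cdot \mathbf{h}$$

and read off

$$f'(\mathbf{x}) = 2\mathbf{x}.$$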

I don’t want to go into more details here, since such calculations don’t occur in beginning calculus courses. I just want to point out that this way of computing derivatives is in fact a natural one, but one which you don’t usually encounter until graduate-level courses.

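Let’s take a look at another example: the derivative of $f(x) = \sin(x)$, and see how it looks using this rewrite. We first write

$$\sin(x+h) = \sin(x)\cos(h) + \cos(x)\sin(h).$$

Now replace all functions of $h$ with their linear approximations. Since $\cos(h) \approx 1$ and $\sin(h) \approx h$ near $h = 0$, we have

$$\sin(x+h) \approx \sin(x) + h\cos(x).$$

This immediately gives that $\cos(x)$ is the derivative of $\sin(x)$.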

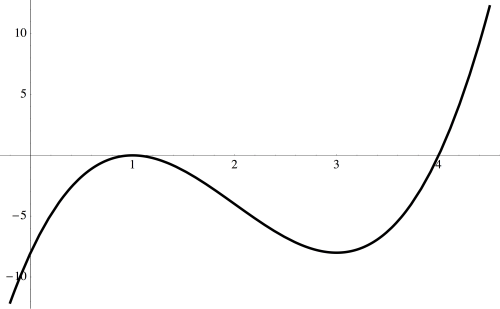

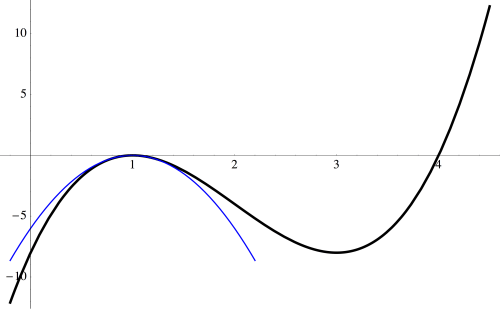

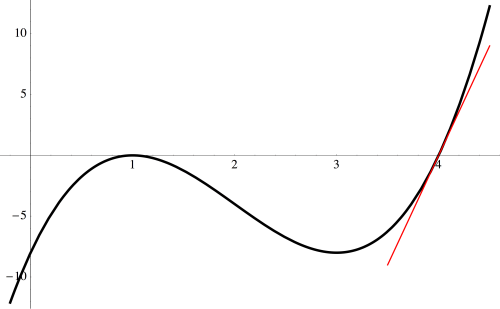

Now the approximation $\cos(h) \approx 1$ is easy to justify geometrically by looking at the graph of $\cos(x)$.

But how do we justify the approximation $\sin(h) \approx h$?

Of course there is no getting around this. The limit

$$\lim_{h \to 0} \frac{\sin(h)}{h} = 1$$

is the one difficult calculation in computing the derivative of $\sin(x)$. So then you’ve got to provide your favorite proof of this limit, and then move on. But this approximation helps to illustrate the essential point: the differentiability of $\sin(x)$ at $x = 0$ does, in a real sense, imply the differentiability of $\sin(x)$ everywhere else.

So computing derivatives in this way doesn’t save any of the hard work, but I think it makes the work a bit more transparent. And as we continually replace functions of $h$ with their linear approximations, this aspect of the derivative is regularly being emphasized.
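A quick numerical look at the sine example can make this concrete. The sketch below is only an illustration, not a proof: it prints $\sin(h)/h$ for shrinking $h$, along with the gap between $\sin(x+h)$ and the linear approximation $\sin(x) + h\cos(x)$. The helper names `linear_sin` and `approximation_error` and the base point $x = 1$ are made up for this example.

```python
import math

def linear_sin(x, h):
    """Linear approximation sin(x) + h*cos(x) to sin(x + h)."""
    return math.sin(x) + h * math.cos(x)

def approximation_error(x, h):
    """Absolute gap between sin(x + h) and its linear approximation."""
    return abs(math.sin(x + h) - linear_sin(x, h))

x = 1.0  # arbitrary base point, chosen only for illustration
for h in (0.1, 0.01, 0.001):
    ratio = math.sin(h) / h          # tends to 1 as h -> 0
    err = approximation_error(x, h)  # shrinks roughly like h**2
    print(f"h={h}: sin(h)/h={ratio:.8f}, error={err:.3e}, error/h={err / h:.3e}")
```

The last column is the one to watch: the error divided by $h$ still goes to zero, which is what makes the approximation a good linear one.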

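How would we use this technique to differentiate $f(x) = \sqrt{x}$? We need

$$\sqrt{x+h} \approx \sqrt{x} + h f'(x),$$

and so

$$x + h \approx \left(\sqrt{x} + h f'(x)\right)^2 \approx x + 2h\sqrt{x}\,f'(x).$$

Since the coefficient of $h$ on the left is $1$, so must be the coefficient on the right, so that

$$2\sqrt{x}\,f'(x) = 1, \quad \text{that is,} \quad f'(x) = \frac{1}{2\sqrt{x}}.$$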
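As a sanity check on the square root example, here is a small Python sketch that squares the candidate linear approximation $\sqrt{x} + h/(2\sqrt{x})$ and compares the result with $x + h$. The function name `linear_sqrt` and the base point $x = 4$ are arbitrary choices for illustration.

```python
import math

def linear_sqrt(x, h):
    """Candidate linear approximation sqrt(x) + h / (2*sqrt(x)) to sqrt(x + h)."""
    return math.sqrt(x) + h / (2 * math.sqrt(x))

x = 4.0  # arbitrary positive base point
for h in (0.1, 0.01, 0.001):
    squared = linear_sqrt(x, h) ** 2
    # Squaring gives x + h + h**2 / (4*x), so it matches x + h up to an h**2 term.
    gap = squared - (x + h)
    print(f"h={h}: squared - (x + h) = {gap:.3e}, h^2/(4x) = {h * h / (4 * x):.3e}")
```

The two printed columns should agree up to rounding, so the only mismatch with $x + h$ is a higher-order term in $h$.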

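As a last example for this week, consider taking the derivative of $f(x) = \tan(x)$. Then we have

$$\tan(x+h) = \frac{\tan(x) + \tan(h)}{1 - \tan(x)\tan(h)}.$$

Now since $\sin(h) \approx h$ and $\cos(h) \approx 1$, we have $\tan(h) \approx h$, and so we can replace to get

$$\tan(x+h) \approx \frac{\tan(x) + h}{1 - h\tan(x)}.$$

Now what do we do? Since we’re considering $h$ near $0$, then $h\tan(x)$ is small (as small as we like), and so we can consider

$$\frac{1}{1 - h\tan(x)}$$

as the sum of the infinite geometric series

$$\frac{1}{1 - h\tan(x)} = 1 + h\tan(x) + h^2\tan^2(x) + \cdots$$

Replacing this sum with its linear approximation, we get

$$\tan(x+h) \approx \left(\tan(x) + h\right)\left(1 + h\tan(x)\right),$$

and so

$$\tan(x+h) \approx \tan(x) + h\left(1 + \tan^2(x)\right).$$

This gives the derivative of $\tan(x)$ to be

$$1 + \tan^2(x) = \sec^2(x).$$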

Neat!
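If you want to watch the two replacement steps at work, here is a minimal Python sketch. It compares $\tan(x+h)$ first with the quotient obtained by replacing $\tan(h)$ with $h$, and then with the linear approximation obtained by keeping only the first-order term of the geometric series. The function names `tan_quotient` and `tan_linear` and the base point $x = 0.5$ are my own choices for the example.

```python
import math

def tan_quotient(x, h):
    """(tan x + h) / (1 - h tan x): the addition formula with tan(h) replaced by h."""
    t = math.tan(x)
    return (t + h) / (1 - h * t)

def tan_linear(x, h):
    """tan x + h (1 + tan^2 x): keep only the terms up to first order in h."""
    t = math.tan(x)
    return t + h * (1 + t * t)

x = 0.5  # arbitrary base point away from the poles of tan
for h in (0.1, 0.01, 0.001):
    exact = math.tan(x + h)
    print(f"h={h}: quotient error={abs(exact - tan_quotient(x, h)):.3e}, "
          f"linear error={abs(exact - tan_linear(x, h)):.3e}")
```

Both errors shrink faster than $h$ itself, which is all the linear approximation needs.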

Now this method takes a bit more work than just using the quotient rule (as is usually done). But using the quotient rule is a purely mechanical process; this way, we are constantly thinking, “How do I replace this expression with a good linear approximation?” Perhaps more is learned this way?

There are more interesting examples using this geometric series idea. We’ll look at a few more next time, and then use this idea to prove the product, quotient, and chain rules. Until then!