The last post in this series will address how I used Processing in the classroom this past semester. Although my experience has been limited so far, the response has been great!

In the Spring 2016 semester, I used Processing in my Linear Algebra and Probability course, which is a four-credit course specifically for CS majors. The idea is to learn linear algebra first from a geometrical perspective, and then to see that a matrix is simply a representation of a geometrical transformation.

I make it a point to generalize and include affine transformations as well, as they are the building blocks for creating fractals using iterated function systems (IFS). The more intuition students have about affine transformations, the easier it is for them to work with IFS. (To learn more about IFS, see Day034, Day035, and Day036 of my blog.)
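For readers who have not seen an IFS in code, here is a minimal sketch in plain Python of the "random iteration" approach: repeatedly pick one affine transformation at random and apply it to the current point. This is not the code from the course handouts. The three maps (a Sierpinski triangle) and the names `apply_affine` and `ifs_points` are my own illustrative choices.

```python
import random

# Each affine map is stored as (a, b, c, d, e, f), meaning
#   (x, y) -> (a*x + b*y + e, c*x + d*y + f).
# These three maps generate a Sierpinski triangle. They are an
# illustrative assumption, not the maps used in the course.
SIERPINSKI = [
    (0.5, 0.0, 0.0, 0.5, 0.0, 0.0),  # shrink toward (0, 0)
    (0.5, 0.0, 0.0, 0.5, 0.5, 0.0),  # shrink, then shift right
    (0.5, 0.0, 0.0, 0.5, 0.0, 0.5),  # shrink, then shift up
]


def apply_affine(transform, point):
    """Apply one affine map (matrix part plus translation) to a point."""
    a, b, c, d, e, f = transform
    x, y = point
    return (a * x + b * y + e, c * x + d * y + f)


def ifs_points(transforms, n_points=10000, skip=20, seed=0):
    """Generate points of the fractal by random iteration.

    The first `skip` iterates are discarded so the point has time
    to settle onto the fractal before anything is recorded.
    """
    rng = random.Random(seed)
    point = (0.0, 0.0)
    points = []
    for i in range(n_points + skip):
        point = apply_affine(rng.choice(transforms), point)
        if i >= skip:
            points.append(point)
    return points


points = ifs_points(SIERPINSKI)
```

Plotting `points` as small dots (in Sage, Processing, or any plotting tool) shows the fractal. Changing any of the six numbers in a map changes the picture, which is why intuition about affine transformations pays off.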

As you may have noticed, many of the movies I’ve discussed deal with IFS. Within the first three weeks of the class (here is the link to the course website), I assign a project to create a single fractal image using an IFS. I use the Sage platform as it supports Python and is open source, and all of my students were supposed to have taken a Python course already. All links, prompts, and handouts for this project may be found on Days 5 and 6 of the course website.

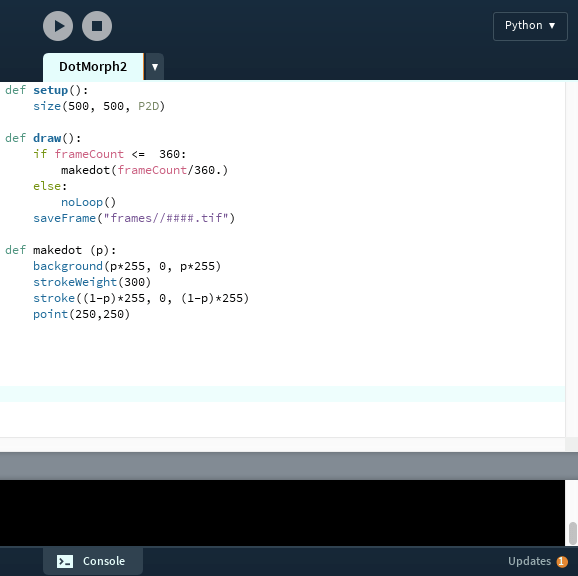

This sets up the class for a Processing project later on in the semester. The timing is not critical; as it turned out, the project was due during the Probability section of the course (the last third), since most students had other large projects due a bit earlier. A sample movie provided to the students may be found on Day 22 of the course website, and the project prompt may be found on Day 33.

The basic idea was to use a parameter to vary the numbers in a series of affine transformations used to create a fractal using an IFS. As the parameter varied, the fractal image varied as well. This allowed for an animation from an initial fractal image to a final fractal image.
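One simple way to realize this idea is to interpolate linearly between the coefficients of a starting IFS and an ending IFS. The sketch below is in plain Python and assumes each affine map is stored as six numbers `(a, b, c, d, e, f)`, meaning `(x, y) -> (a*x + b*y + e, c*x + d*y + f)`. The maps in `START` and `END` and the helper names are hypothetical, chosen only for illustration; the sample movie for the course may vary its numbers differently.

```python
# Starting and ending IFS, each a list of affine maps (a, b, c, d, e, f).
# Both lists must contain the same number of maps.
# These particular numbers are illustrative assumptions.
START = [
    (0.5, 0.0, 0.0, 0.5, 0.0, 0.0),
    (0.5, 0.0, 0.0, 0.5, 0.5, 0.0),
    (0.5, 0.0, 0.0, 0.5, 0.0, 0.5),
]
END = [
    (0.5, -0.5, 0.5, 0.5, 0.0, 0.0),
    (0.5, 0.0, 0.0, 0.5, 0.5, 0.0),
    (0.25, 0.0, 0.0, 0.75, 0.0, 0.5),
]


def lerp_transforms(start, end, t):
    """Blend two IFS coefficient lists; t = 0 gives start, t = 1 gives end."""
    return [
        tuple((1 - t) * s + t * e for s, e in zip(map_start, map_end))
        for map_start, map_end in zip(start, end)
    ]


def frame_transforms(start, end, n_frames):
    """Return one interpolated IFS per movie frame."""
    return [
        lerp_transforms(start, end, k / (n_frames - 1))
        for k in range(n_frames)
    ]


# One IFS per frame; drawing the fractal for each gives the animation.
frames = frame_transforms(START, END, n_frames=5)
```

In a Processing sketch, the parameter would typically be advanced once per call to `draw()`, with the fractal for the current set of maps plotted in each frame.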

My grading rubric was fairly simple: each feature a student added beyond my bare-bones movie bumped their grade up. Roughly speaking, four additional features resulted in an A for the assignment. These might include use of color, background image, music, text, etc.

I was inspired by how eagerly students took on this assignment! They really got into it. They appreciated using mathematics — specifically linear algebra — in a hands-on application. I do feel that it is important for CS majors to understand mathematics from an applied viewpoint as well as a theoretical one. Very few CS majors will go on to become theoretical computer scientists.

We did take some class time to watch movies that students uploaded to my Google Drive. All had their interesting features, but some were particularly creative. Let’s take a look at a few of them.

Here is Monica’s description of her movie:

My fractal movie consists of a Phoenix, morphing into a shell, morphing into the yin-yang symbol, and then morphing apart. I chose my background color to be pure black to create contrast between the orange and yellow colors of my fractal. The top fractal, which starts out as yellow, shifts downward the whole time. On the other hand, the bottom fractal, which starts out orange, shifts upward. In both cases, a small number of the points from each fractal get stuck in the opposite fractal and begin to shift with it. This leaves the two fractals at the end of the movie intertwined. I created text at the bottom of the fractal movie, which says “Phoenix.” I wanted to enhance the overall movie and give it a name. Lastly, I added music to my fractal movie. I picked the song “Sweet Caroline” by Neil Diamond.

Ethan says this about his movie:

The inspiration for this fractal came from a process of trial and error. I knew I wanted to have symmetry and bright color, but everything else was undecided. After creating the shape of the fractal, I decided to create a complete copy of the fractal and rotate one copy on top of the other. After seeing what it looked like with a simple rotation, I decided something was missing so I had the copied image rotate and either shrink or grow, depending on a random variable. In this movie the image shrinks. I used transitional gradients because I wanted to add more color without it looking too busy or cluttered.

Finally, this is how Mohamed describes his video:

This video starts as a set of four squares scaled by 0.45, and each square has either x increased by 0.5, y increased by 0.5, both increased by 0.5, or neither increased by 0.5. The grays and blacks as the video starts show the random points plotted as the numbers fed into the function are increasing, while the blues and whites show the points as the numbers fed into the function are decreasing. I chose to do this because we often see growth of functions in videos, but we do not see the regression back to its original form too often….

I was very pleased with how creative students got with the project, and how enthusiastic they were about their final videos. I have another project underway where I use Processing: a Mathematics and Digital Art course I’ll be teaching this Fall semester. I’ll be talking about this course soon, so be sure to follow along!