Now that the overall structure of the course is laid out, I’d like to describe the week-by-week sequence of topics. Keep in mind this may change somewhat when I actually teach the course, but the progression will stay essentially the same.

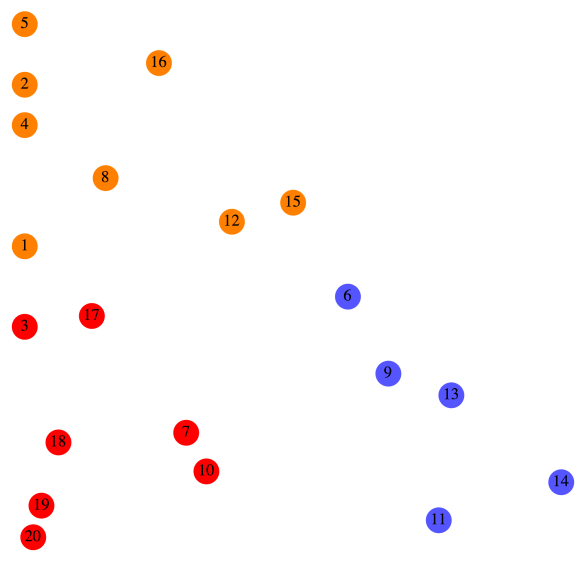

Week 1 is inspired by the work of Josef Albers (which I discuss on Day002 of this blog). Students will be introduced to the CMYK and RGB color spaces, and will begin by creating pieces like this:

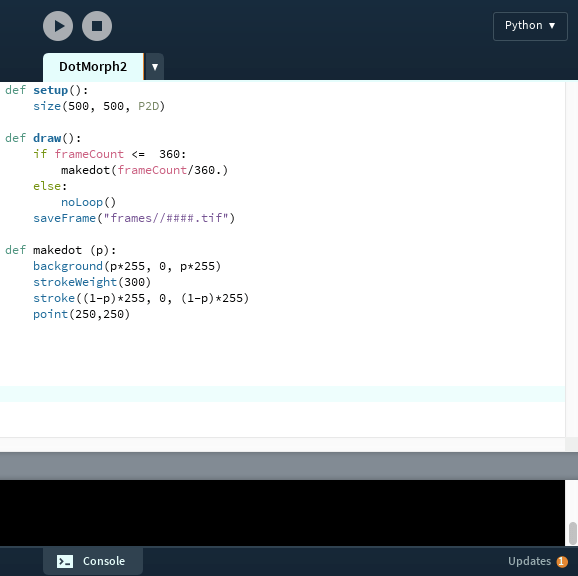

We’ll use Python code in the Sage environment (a basic script will be provided), and learn about the use of random number generation to create pattern and texture. This may be many students’ first exposure to working with code, so we’ll take it slowly. As with many of the topics we’ll discuss, students will be asked to read the relevant blog post before class. While we’ll still have to review in class, the idea is to free up as much class time as possible for exploration in the computer lab.
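To give a sense of how random numbers can produce texture, here is a minimal sketch in plain Python. It is not the script students will receive; the function name `random_texture` and the `base`, `spread`, and `seed` parameters are assumptions made for illustration. Each cell of a grid gets an RGB color that is a small random perturbation of one base color.

```python
import random

def random_texture(rows, cols, base=(0.2, 0.4, 0.8), spread=0.15, seed=None):
    """Return a rows x cols grid of RGB triples scattered around a base color.

    Hypothetical helper: channels are floats in [0, 1], as in many RGB APIs.
    """
    rng = random.Random(seed)
    grid = []
    for _ in range(rows):
        row = []
        for _ in range(cols):
            # Perturb each channel independently, then clamp to [0, 1].
            color = tuple(
                min(1.0, max(0.0, c + rng.uniform(-spread, spread)))
                for c in base
            )
            row.append(color)
        grid.append(row)
    return grid

texture = random_texture(4, 4, seed=1)
for row in texture:
    # Print each cell as a hex color so the variation is easy to see.
    print(" ".join("#%02x%02x%02x" % tuple(round(255 * c) for c in color)
                   for color in row))
```

A larger `spread` gives a rougher texture, while a `spread` of zero gives a flat field of the base color.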

Week 2 will revolve around creating pieces like Evaporation, which I discuss on Day011 and Day012. Again, we’ll be in the Sage environment (with a script provided). Here, the ideas to introduce are basic looping constructs in Python, as well as creating a color gradient.
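As a rough illustration of how a loop and a color gradient fit together, the sketch below blends one RGB color into another by linear interpolation. The function name `gradient` and its parameters are assumptions for this example, not taken from the course script.

```python
def gradient(start, end, steps):
    """Linearly interpolate between two RGB colors in `steps` stages.

    Hypothetical helper: colors are triples of floats in [0, 1].
    """
    if steps < 2:
        raise ValueError("steps must be at least 2")
    colors = []
    for i in range(steps):
        t = i / (steps - 1)  # t runs from 0 (start color) to 1 (end color)
        colors.append(tuple((1 - t) * s + t * e for s, e in zip(start, end)))
    return colors

# Five stages from pure red to pure blue.
strip = gradient((1.0, 0.0, 0.0), (0.0, 0.0, 1.0), 5)
for color in strip:
    print(color)
```

The loop index `i` does double duty here: it counts the stages and, once divided by `steps - 1`, becomes the blending weight.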

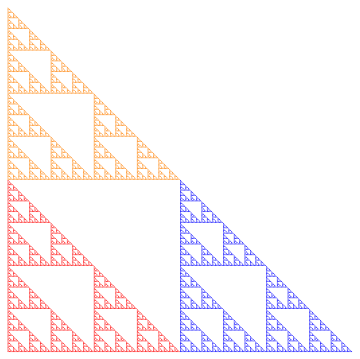

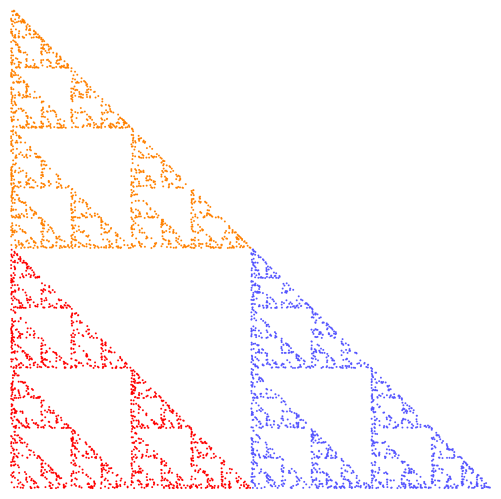

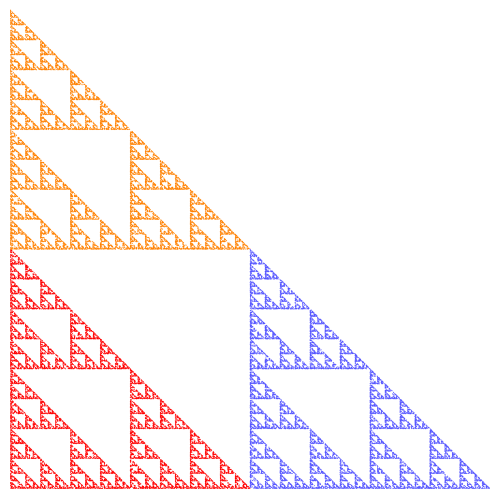

Weeks 3–5 will be all about fractals. This is an ambitious three weeks, so we’ll begin with iterated function systems (IFS), which I discuss extensively on my blog (see Day034, Day035, and Day036 for an introduction).

The important mathematical concept here is affine transformation, which will likely be unfamiliar to most students. Sure, they may understand a matrix as an “array of numbers,” but likely do not see a matrix as a representation of a linear transformation.
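One way to make the "matrix as transformation" idea concrete is to apply a few 2×2 matrices to the corners of the unit square and watch where they land. This is only a sketch; `apply_matrix` and the three example matrices are chosen here for illustration.

```python
def apply_matrix(m, point):
    """Apply the 2x2 matrix m = ((a, b), (c, d)) to the point (x, y)."""
    (a, b), (c, d) = m
    x, y = point
    return (a * x + b * y, c * x + d * y)

unit_square = [(0, 0), (1, 0), (1, 1), (0, 1)]

# Illustrative matrices: each one moves the square in a different way.
examples = {
    "scale by 1/2": ((0.5, 0.0), (0.0, 0.5)),
    "quarter turn": ((0, -1), (1, 0)),   # 90 degrees counterclockwise
    "shear":        ((1, 1), (0, 1)),
}

for name, m in examples.items():
    print(name, [apply_matrix(m, p) for p in unit_square])
```

Seeing the same four corners move under different entries suggests that the numbers in the array are instructions for moving points, not just data.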

But there is such a wealth of fascinating images which can be created using affine transformations in an IFS that I think the effort is worth it. I’ve done something similar with a linear algebra course for computer science majors with some success.

I’ll start with the well-known Sierpinski triangle, and ask students to think about the self-similar nature of this fractal. While the self-similarity may be simple to explain in words, how would you explain it mathematically? This (and similar examples) will be used to motivate the need for affine transformations.

In parallel with this, we’ll look at a Python script for creating an IFS. There is a bit more to this algorithm than the others encountered so far, so we’ll need to look at it carefully, and see where the affine transformations fit in. I’ll create a “dictionary” of affine transformations for the class, so they can see and learn how the entries of a matrix influence the linear/affine transformations.
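The course script is not reproduced here, so the following is only a sketch of one common way to draw an IFS, the random iteration algorithm (sometimes called the chaos game); the actual script may work differently. The names `SIERPINSKI`, `apply_affine`, and `ifs_points` are assumptions. Each affine transformation is stored as six numbers, and the three maps below each shrink the plane by half toward one corner of a right-angled Sierpinski triangle.

```python
import random

# Each map is (a, b, c, d, e, f), meaning (x, y) -> (a*x + b*y + e, c*x + d*y + f).
# The matrix part (a, b, c, d) scales by 1/2; (e, f) is the translation.
SIERPINSKI = [
    (0.5, 0.0, 0.0, 0.5, 0.0, 0.0),  # shrink toward (0, 0)
    (0.5, 0.0, 0.0, 0.5, 0.5, 0.0),  # shrink toward (1, 0)
    (0.5, 0.0, 0.0, 0.5, 0.0, 0.5),  # shrink toward (0, 1)
]

def apply_affine(t, point):
    """Apply one affine transformation to a point."""
    a, b, c, d, e, f = t
    x, y = point
    return (a * x + b * y + e, c * x + d * y + f)

def ifs_points(transforms, n, seed=None, skip=20):
    """Generate n points by repeatedly applying a randomly chosen map."""
    rng = random.Random(seed)
    point = (0.0, 0.0)
    points = []
    for i in range(n + skip):
        point = apply_affine(rng.choice(transforms), point)
        if i >= skip:  # discard the first few iterates while the orbit settles
            points.append(point)
    return points

points = ifs_points(SIERPINSKI, 5000, seed=0)
print(points[:3])
```

Plotting `points` as small dots should reveal the triangle. Changing a single entry in one of the maps and replotting is a quick way to see how that entry influences the image.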

Having students understand IFS in these three weeks is the highest priority, since they form the basis of our work with Processing later on in the semester. As with any course like this, so much depends on the students who are in the course, and their mathematical background knowledge.

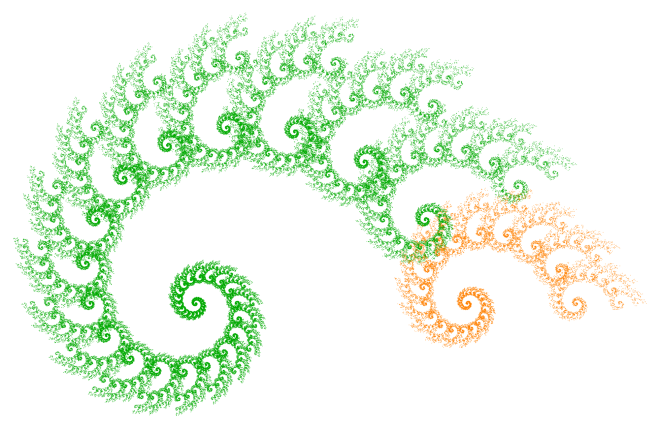

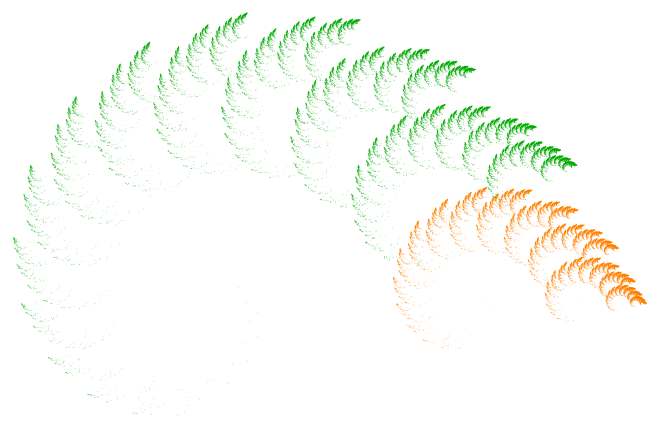

With this being said, it may be that most of these weeks will be devoted to affine transformations and IFS. With whatever time is left over, I’ll be discussing fractal images based on the same algorithm used to produce the Koch curve/snowflake (which I discuss on Day007, Day008, Day009, and Day027).

The initial challenge is to get students to understand a recursive algorithm, which is always a difficult new idea, even for computer science majors. Hopefully the geometric nature of the recursion will help in that regard.
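To show what the geometric recursion looks like in code, here is a sketch of the standard Koch curve construction: each segment is replaced by four shorter segments, and the same rule is applied again to each of those. This is the textbook recursive version, not necessarily the algorithm from the blog posts cited above, and the function name `koch` is an assumption.

```python
import math

def koch(p, q, depth):
    """Return the vertices of a Koch curve from p to q, excluding q itself."""
    if depth == 0:
        return [p]  # base case: a plain segment contributes its start point
    (x1, y1), (x2, y2) = p, q
    dx, dy = (x2 - x1) / 3, (y2 - y1) / 3
    a = (x1 + dx, y1 + dy)          # one third of the way along
    b = (x1 + 2 * dx, y1 + 2 * dy)  # two thirds of the way along
    # The peak is the middle third rotated 60 degrees counterclockwise about a.
    c60, s60 = 0.5, math.sqrt(3) / 2
    peak = (a[0] + dx * c60 - dy * s60, a[1] + dx * s60 + dy * c60)
    points = []
    for start, end in [(p, a), (a, peak), (peak, b), (b, q)]:
        points.extend(koch(start, end, depth - 1))  # recurse on each piece
    return points

# Three levels of recursion on the unit segment; append the final endpoint.
curve = koch((0.0, 0.0), (1.0, 0.0), 3) + [(1.0, 0.0)]
print(len(curve), "vertices")
```

Each level multiplies the number of segments by four and the total length by 4/3, which is something students can check directly from the list of vertices.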

If there is time, we’ll take a brief excursion into number theory. Without going into too many details (see the blog posts mentioned above for more), choosing angles which allow the algorithm to close up and draw a centrally symmetric figure depends on solving a linear Diophantine equation.

It turns out that the relevant equation may be solved explicitly, yielding whole families of values which produce intricate images. Here is one I just created last week for a presentation on this topic I’ll be giving at the Symmetry Festival 2016 in Vienna this July:

There is quite a bit of number theory which goes into setting up and solving this equation, but all at the elementary level. We’ll just go as far as we have time to.
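The specific equation is not reproduced here, so the sketch below only illustrates the general technique: solving a generic linear Diophantine equation of the form a·x + b·y = c with the extended Euclidean algorithm. The form of the equation, the function names, and the sample coefficients are all assumptions, and the code assumes a and b are positive integers.

```python
def extended_gcd(a, b):
    """Return (g, x, y) with a*x + b*y == g, where g = gcd(a, b)."""
    if b == 0:
        return (a, 1, 0)
    g, x, y = extended_gcd(b, a % b)
    return (g, y, x - (a // b) * y)

def solve_linear_diophantine(a, b, c):
    """Return one integer solution (x, y) of a*x + b*y == c, or None.

    Assumes a and b are positive integers (illustrative helper).
    """
    g, x, y = extended_gcd(a, b)
    if c % g != 0:
        return None  # no integer solutions unless gcd(a, b) divides c
    k = c // g
    return (x * k, y * k)

# Sample coefficients chosen only for illustration.
solution = solve_linear_diophantine(12, 42, 30)
print(solution)
```

Once one solution (x, y) is known, every other solution has the form (x + t·b/g, y − t·a/g) for an integer t, where g = gcd(a, b). That is one sense in which a single equation can yield a whole family of values.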

Week 6 will be the first in a series of three Presentation Weeks. This week will be devoted to having students select and present a paper or two from the Bridges archive. This archive contains over 1000 papers given at the Bridges conferences since 1998, and is searchable.

The idea is to expose students to the breadth of the relationship between mathematics and art. Because of the need to explain both the mathematics and programming behind the images we’ll create in class, there necessarily will be some sacrifice in the breadth of the course content. Hopefully these brief presentations will remedy this to some extent.

With three 65-minute class periods and 13 students, it shouldn’t be difficult to allow everyone a 10-minute presentation during this week: 13 presentations take 130 of the 195 available minutes. Students are not expected to understand every detail of a particular paper, but they should at least communicate the main points.

Presentations will be both peer-evaluated and evaluated by me. As these are first-year students, it is understood that they may not have given many presentations of this type before. It is expected that they will improve as the semester progresses.

I realize that some of these ideas are repeated from last week’s post, but I did want to make these two posts covering the week-by-week sequence of topics self-contained. I also wanted to give enough detail so that anyone considering offering a similar course has a clear idea of what I have in mind. Next week, we’ll finish the outline, so stay tuned!
