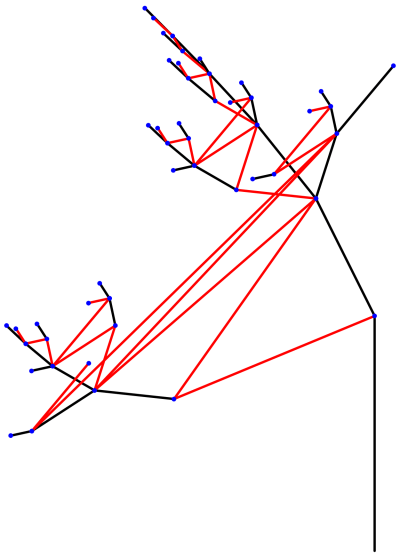

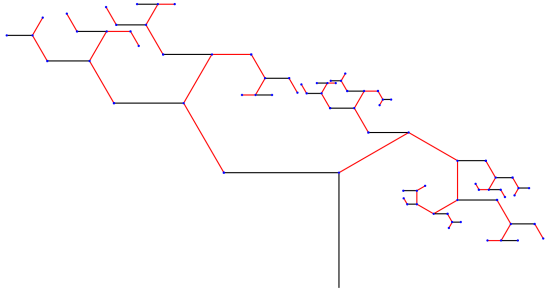

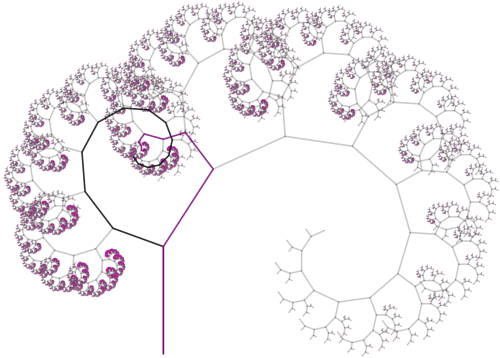

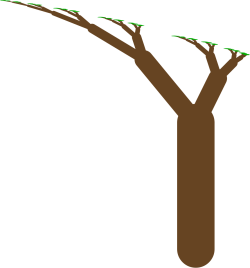

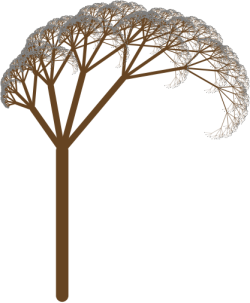

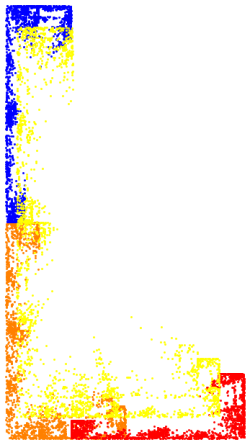

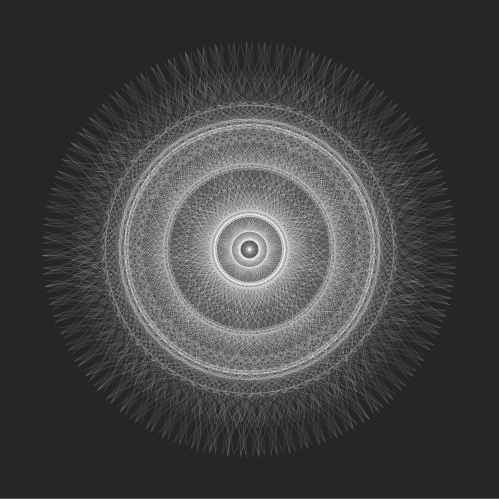

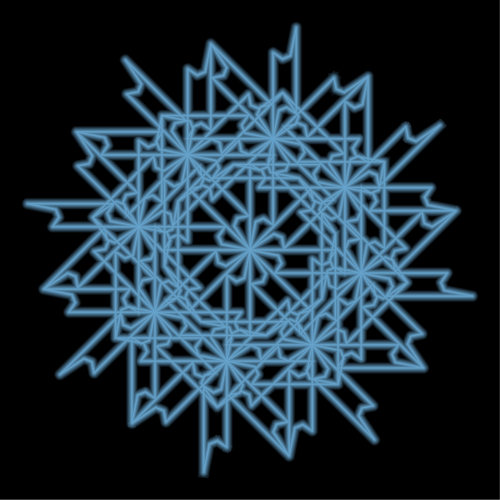

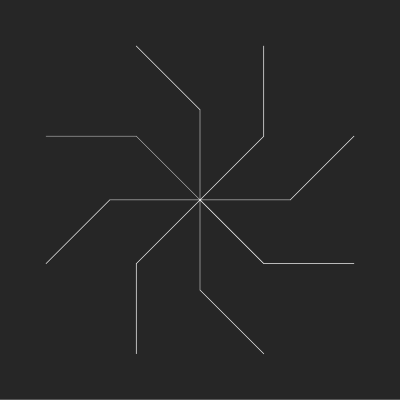

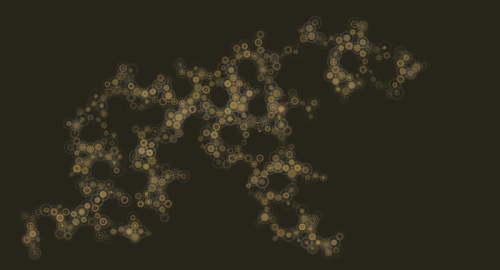

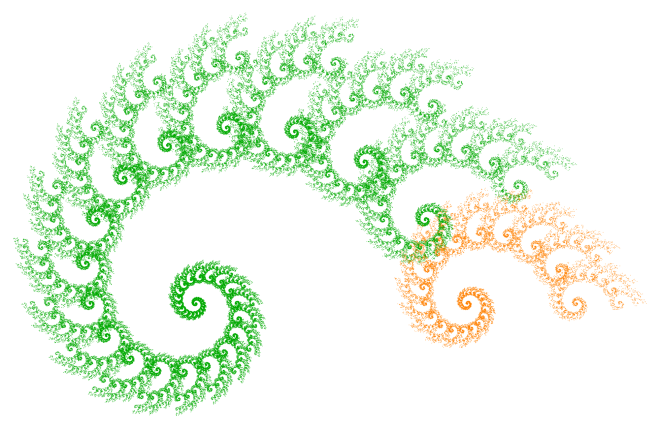

A few weeks, ago I dug out a problem which had puzzled me for over three years. I finally decided that now it was time to really dig in — and to my surprise and delight, not only did I solve the problem, but I’ve already got a draft of a paper written! The figure below is from that paper.

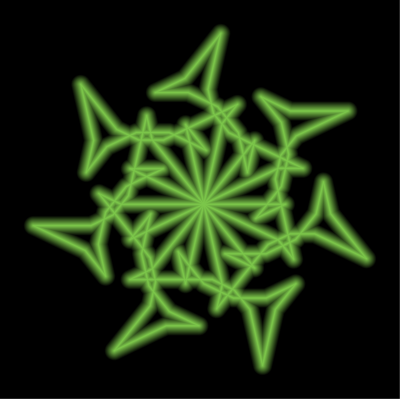

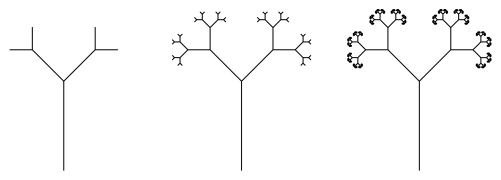

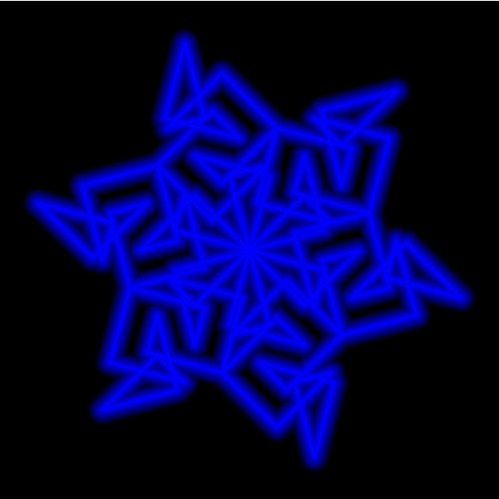

The problem is yet another variation on the recursion which produces the Koch snowflake. I discussed the Koch snowflake in one my first posts, so visit my Day007 post on this fascinating fractal for a refresher.

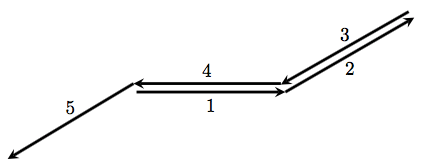

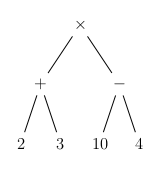

So what is the variation here? Consider the general recursive scheme

where represents a forward move and the

indicate counterclockwise turns, which would be common instructions in a turtle graphics setting.

The Koch curve is generated by choosing

Previously I’ve studied cases where and the resulting image possesses central symmetry, unlike the Koch curve (see my Day008 post for examples). But this is my first venture into exploring instances where

Things work a bit differently here….

So let’s investigate the example illustrated above in more detail. The recursive scheme which generates this spiral is given by

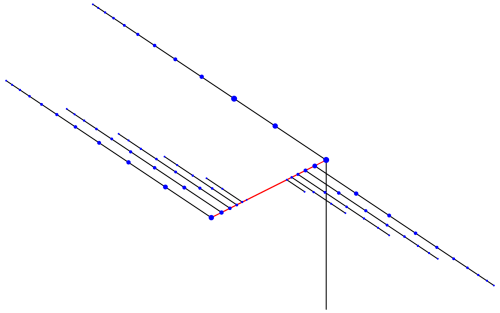

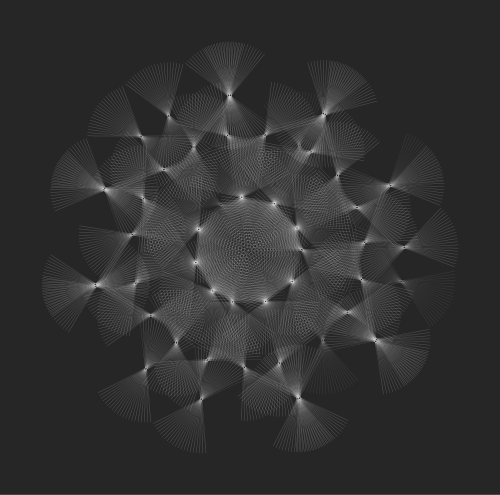

How does this scheme draw a spiral arm? Let’s look at the figure below.

We begin at the origin, and then draw a segment at labeled “1” in the figure. Next, we turn counterclockwise

(since

) and draw the segment labeled “2”.

The next turn is so we turn completely around and begin retracing the arm in the opposite direction. Then turn counterclockwise

and move forward. Note that since

this has the effect of taking us right back to the origin.

What next? It is at this point that we invoke a recursive call — and so the next angle tells us what direction the next arm will be drawn in. Of course, like before, the three angles after that will continue drawing the arm and then bring us back to the origin, awaiting the next recursive call.

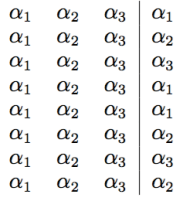

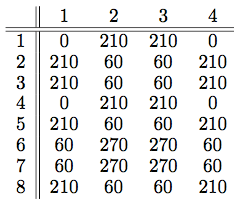

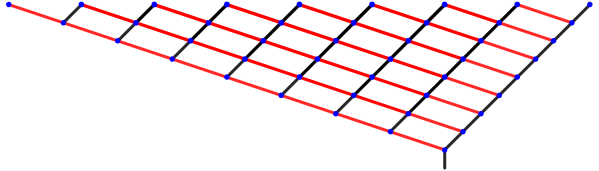

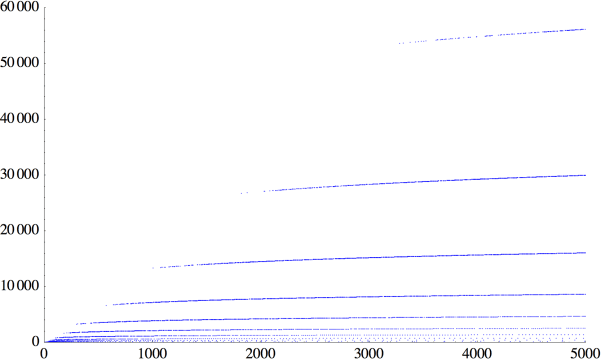

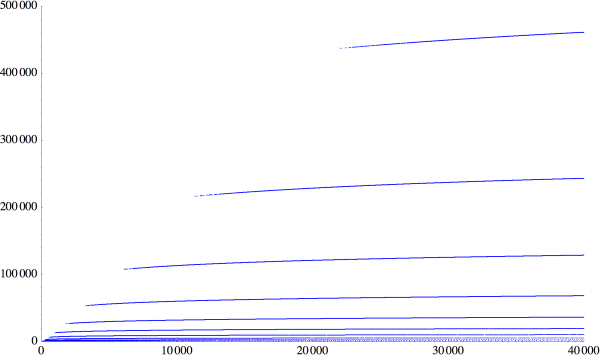

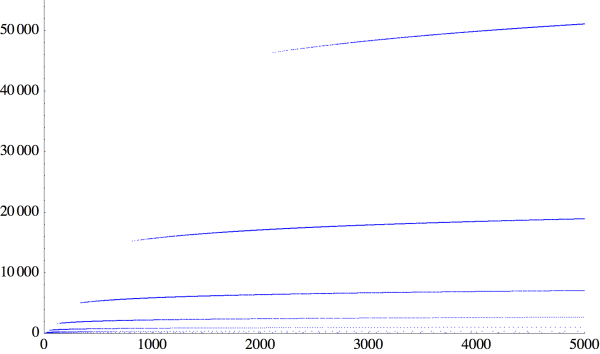

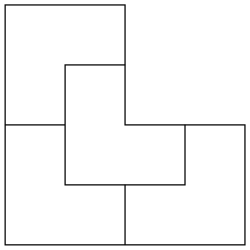

This behavior can be illustrated in the following chart.

Here, the angles turned are listed in rows of four, one after another. The first three angles in each row are the same, and their job is to complete the arm once a direction is chosen. But the direction chosen is determined by the fourth column (separated by the divider), whose behavior is not periodic and highly recursive.

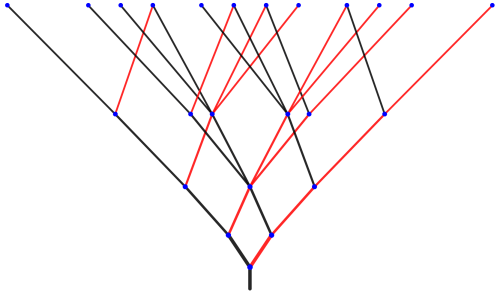

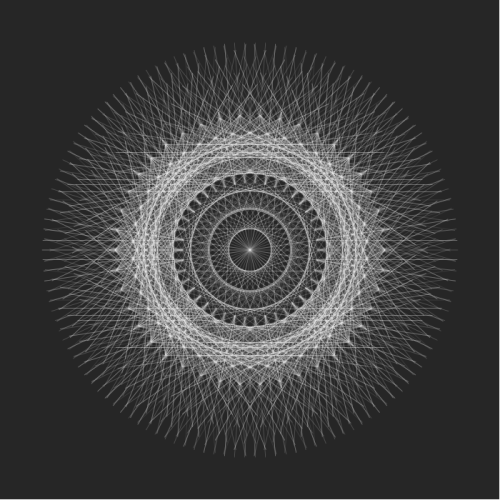

So where do we go from here? The next chart we’ll look it is a chart of the directions the arms are drawn in.

We read this chart in rows as follows: the first arm is drawn at an angle of directly to the right. The second arm is drawn at an angle of

as can be seen in the figure above.

But the third arm exactly retraces the second arm drawn, since it is pointed in the direction of as well. The fourth arm drawn — at an angle of

— exactly retraces the first arm drawn.

So you can see what’s going on. One important consequence of the recursive algorithm is that the arms keep being retraced over and over again.

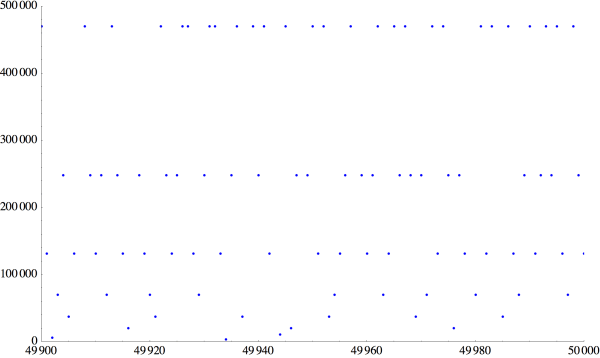

When will the complete spiral be drawn? Well, we need to see every multiple of in the chart above. Of course eight rows isn’t enough. But what is really surprising is that it takes over 1,000,000 rows before every multiple of 30 is encountered!

How would you show this? We can’t go into all the details here. The important observation is that if you read across the chart one row at a time, you get the same sequence of angles by reading down the first column of the chart.

But it isn’t enough to just observe this — it has to be proved. This is where some elementary number theory comes in. No more than what might be seen in an undergraduate number theory course, but beyond the scope of this post.

Slight step up on my soapbox — while I usually deplore the definition of mathematics as the “science of finding patterns” (as this only scratches the surface), in this case, finding patterns is critically important. With some trial and error, you hit upon making charts in rows of four, and then putting the directions the arms are drawn in rows of four, and then stare at the numbers until you notice patterns in the charts. The trick is knowing what to prove — once stated properly, the results almost prove themselves.

You’ll have to wait for the paper to come out for all the details. But here is a brief summary. Suppose you divide the circle into n parts, where n is even. Then devise a recursive scheme using the turning angles

Now define

When n is divisible by 4, the spiral has n arms, and you need to draw exactly segments to render the entire spiral. In our case, with 12 arms, it takes

segments to render the spiral.

But when n is even but not divisible by four, the spiral only has n/2 arms, and it takes segments to draw a complete image of the spiral.

Absolutely amazing, in my opinion. I formulated these conjectures over three years ago, but got stuck. A few weeks ago I had the house to myself for a while, and I just sat down and said to myself, “Look, you’ve already written one paper on these recursions. You can do this.”

And within two days, I worked out the patterns. Then the proofs, and within a week, a draft of the paper.

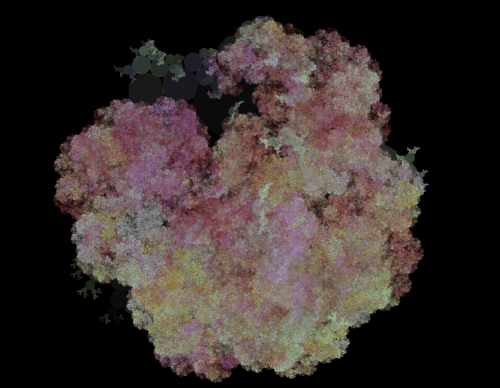

I am always humbled by such a seemingly innocuous problem — generating a simple spiral . But there are so many levels to this problem, and so much interesting mathematics to be discovered. I’ll continue exploring recursively drawn images, and share the amazing results with you when I find them!

There’s so much you can do with just one pair of transformations!

There’s so much you can do with just one pair of transformations!